My Facebook feed includes people who are convinced that President Biden is a figurehead, and that someone like Vice President Harris or Ron Klain is running the country. There are others who believe that we are about to be overrun by migrants. Others feel certain that we are edging closer to a socialist state. It also has folks who were convinced that the Supreme Court was going to overturn the election in favor of Donald Trump, whom they previously believed was a Russian agent.

In the aftermath of the 2020 election, congressional hearings took place on the spread of misinformation, with some Congressional Democrats arguing to stifle speech they find objectionable. The House is once again grilling Big Tech, bringing Mark Zuckerberg of Facebook, Google CEO Sundar Pichai, and Jack Dorsey of Twitter in for questioning last month. Meanwhile, Republicans co-opt the phrase “cancel culture” to deflect accountability for spreading dangerous falsehoods in the interest of political expediency.

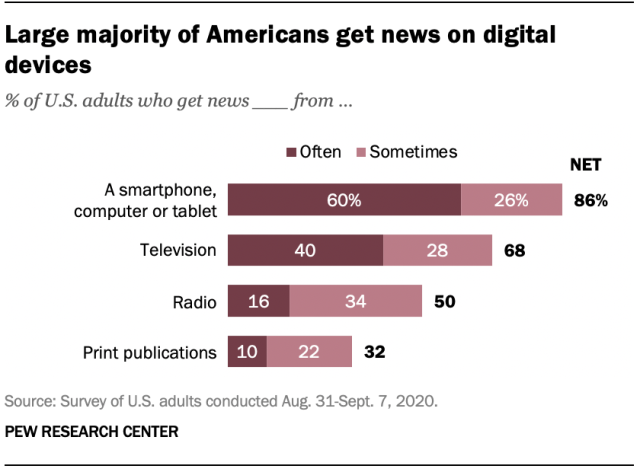

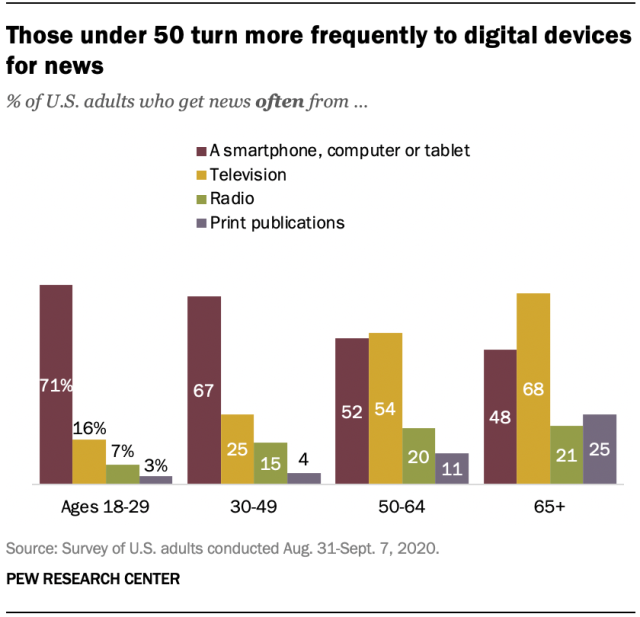

Has the social media echo chamber become so loud that reason is drowned out? A 2020 Pew Research study found that 86% of Americans get news from their smartphone, tablet, or computer “often” or “sometimes”. That number exceeded the totals for TV (68%), radio (50%), and print (32%). Among the 86%, approximately 53% said that they often/sometimes get their news from social media. Social media news consumers tend to be less engaged and knowledgeable than those who primarily use traditional sources. They also tend to be younger; among those aged 30-49, 67% get news from social media often/sometimes, and the number goes up to 71% for ages 18-29, the youngest adults. This is an accelerating trend.

Unlike today, popular media used to have guardrails. In 1949, the early days of broadcasting, long before cable and the internet, broadcasters were obligated to present opinion-based programming in an “honest, equitable and balanced” way as a condition of their licenses. Along with the “equal time” provision, this was called “The Fairness Doctrine”.

The Fairness Doctrine lasted for 38 years, even surviving a Supreme Court challenge in 1969. Nevertheless, in 1985, the FCC under President Reagan decided that the doctrine hurt the public interest and violated free speech. It was formally repealed in 1987, and its removal was integral to the rise of Rush Limbaugh and conservative political talk radio.

As a matter of clarification, the demise of the Fairness Doctrine had nothing to do with the rise of Fox News, MSNBC, other partisan cable outlets, or internet sites. The policy only applied to entities holding broadcast licenses from the government. Cable networks and internet companies are not regulated by the FCC, nor are they required to hold broadcast licenses.

I am not advocating a return to the pre-1985 policy; however, I do believe we need a modern reinterpretation of it for the Internet Age. I argue that uncurated, sparingly fact-checked “news feeds” on social media pose a greater threat to democracy and national comity than the journalistically-bound broadcasters of the 20th century ever did. The government already exerts authority over internet service providers (ISPs) under the Communications Act of 1934, as further codified by President Obama’s FCC in 2015. The Fairness Doctrine was never overturned in court; it was repealed by the FCC. Social media platforms that use the regulated ISPs to generate tremendous profits should have an ethical, if not legal, responsibility not to undermine the republic while doing so.

In short: The American solution to questionable speech should be more speech, not less. Censorship is not just bad policy, but lethal to the marketplace of ideas and anathema to the principles on which our country was founded.

Rather than calling tech CEOs in and threatening their businesses, Congress could work with tech companies to create a system that gives users a fighting chance at developing a balanced, informed viewpoint. As of right now, social media algorithms are designed to construct ideological echo chambers, which is damaging not only to individuals but to society at large.

We know that Facebook, for instance, creates algorithms to drive engagement among its users by curating their feeds to deliver posts akin to those they have read and interacted with in the past. Users are also served advertising targeted by age, gender, geography, and preferences gleaned from their online and purchasing behavior. At their worst, these algorithms match vulnerable individuals with dangerous ideologies, such as conspiracy thinking like QAnon or extremist groups like the Proud Boys.

Alternatively, platforms should work with a non-partisan group to create “information offsets” that would be integrated into a user’s feed based on the user’s interactions. If Jane reads a New York Times article about Georgia’s investigation into President Trump’s phone call, perhaps she is fed a New York Post story about Governor Cuomo potentially covering up Covid data. If Joe reads a Fox piece about President Biden mishandling the border, he can be fed a story from Axios about Dominion suing Fox News.

Obviously, the way in which these side-by-side comparisons are made would be subject to debate. Not all stories carry the same weight, and this system could overcorrect, generating false equivalencies that further spur ideological divides.

While the proposal would make Facebook or Twitter feeds less fine-tuned to the user’s existing preferences, for the tech companies, the idea of an “honest, equitable and balanced” feed may be more appetizing and less intrusive than continued government scrutiny. As a society, maybe we can begin to crack the door to a more informed discourse. There’s a meme floating around that says, “An argument is about who is right while a discussion is about what is right.”

Still, as the saying goes, we can lead the horses to water, but we can’t make them drink. Many of the “information offsets” will go unread. But just as a TV commercial fast-forwarded on your DVR has some value, even the headline of the unread article can create a little dissonance that can combat ideological echo chambers, which would lead to more knowledge and honest discussion. It would cultivate a healthier marketplace of ideas.

Surely the details are devilish. Many good news and opinion sources operate with paywalls, and not all media outlets conduct themselves with the same level of journalistic integrity and objectivity. Regardless of these outlying concerns (which will require creative solutions), we cannot continue to operate as we currently do. We need to start somewhere. Why not social media, the place where our next generation of citizens and leaders is consuming more news every day?
