Headlines

April 5, 2026

Axios

Trump Deadline for Iran Is Monday

Trump's 10-day deadline to Iran is expected to expire on Monday. He previously threatened to bomb the country's energy, water and oil infrastructure if no deal was reached to open the strait.

ESPN

Michigan and UConn Advance to Title Game

The road ends at Lucas Oil Stadium, where UConn and Michigan bested Illinois and Arizona to advance to Monday's national championship. ESPN's college basketball crew was on-site to break down how both of Saturday's Final Four games were won.

NBC News

Polymarket Apologizes for Wagers

Prediction market platform Polymarket issued an apology for allowing users to place bets on the fate of American pilots aboard a U.S. fighter jet downed over Iran.

The New York Times

Bolton: Finish the Job in Iran

"These suggestions may be difficult for Mr. Trump and some of his supporters to swallow. But they come from the need for the United States to secure a strategically critical success in the Gulf — not another humiliating retreat in an increasingly dangerous world."

Mediaite

Poll on Iran’s Uranium Stirs Debate

“Mr. President, you’re going to want to see this result!” Smerconish exclaimed to the camera when coming back from commercial. “You know, oftentimes we get tens of thousands of people voting. It’s not scientific. It’s damn interesting...”

AP News

What Would Aliens Think of Us?

For generations, human beings have wondered: What would alien life from another planet be like? But we rarely ask the opposite: What would they think of us?

The State

Geno Auriemma Apologizes

Auriemma made national news after he confronted Staley in the final seconds of USC’s 62-48 upset win over UConn in the Final Four. Auriemma implied postgame that he was frustrated by Staley’s lack of a pregame handshake minutes before tipoff.

Barbara Fried

FTX Payouts Near Completion

On March 31, 2026, the FTX Debtors distributed another $2.2 billion to FTX customers. That is on top of $8.1 billion distributed in 2025, yielding total payouts to date of $10.3 billion.

The Washington Post

China Exposes U.S. Forces

The private companies — some with ties to the military — are marketing detailed intelligence on movements of U.S. forces, even as Beijing seeks to keep its distance.

Al Jazeera

US Revokes Soleimani Niece’s Visa

The United States has revoked the permanent residency of two women it says are related to Qassem Soleimani, the late major general who led Iran’s Quds Force, the foreign branch of the Islamic Revolutionary Guard Corps (IRGC), from 1998 until his assassination in 2020.

The Crimson

Harvard Faculty To Vote on A’s

The Harvard Crimson Editorial Board criticizes Harvard's grading policy revisions, arguing that the introduction of a 'SAT+' grade complicates the satisfactory/unsatisfactory system.

USA Today

Tesla Has Thousands of Unsold Cars

Tesla produced 50,363 more electric cars than it was able to sell in the first three months of 2026, even with a slight uptick in EV interest becoming apparent amid rising gas prices.

New York Post

Emanuel Critiques Dem Extremes

Ex-ambassador Rahm Emanuel is now the latest of a growing number of Democrats to blow their tops over their party’s obsession with nutty cultural and identity issues.

CBS Sports

5 Players Who’d Have Been Ineligible

Saturday night's Final Four matchups would have a vastly different flavor if they were contested under provisions outlined in an executive order signed Friday. The order calls for restricted eligibility windows and transfer limitations.

Politico

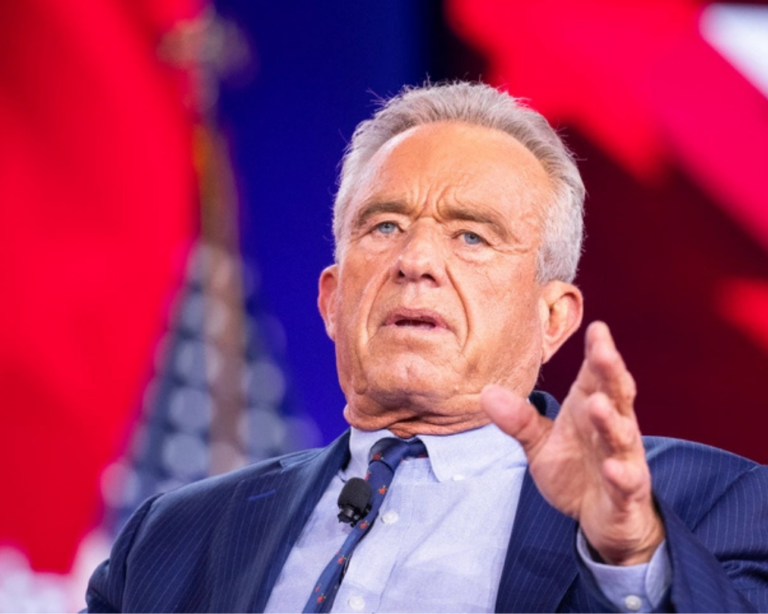

RFK Jr. To Hit the Road for MAHA

Health and Human Services Secretary Robert F. Kennedy Jr. is ramping up midterm travel to revive his “Make America Healthy Again” agenda, armed with a carefully crafted list of popular food and fitness policies to sell.

Fox News

Kim Alexis Questions Body Positivity

As one of the most recognizable supermodels of the 1980s, Kim Alexis knows a thing or two about the pressure of meeting beauty standards, but she also sees a dangerous trend among those who are eschewing those standards completely.

NPR

Artemis Crew Are Quite the Photogs

It has only been a couple of days since NASA successfully launched astronauts to the moon for the first time in over half a century. But the Artemis II mission's four-person crew has already delivered striking postcards from their journey.

Sky News

Court Hearing for Ambulance Arson Case

Two young men and a 17-year-old boy have been remanded in custody after appearing in court over an arson attack on Jewish volunteer ambulances in Golders Green. A further arrest in connection with the incident was made at the court.

KTLA

Millennials’ Office Crush Trend

Recently, dating app Hily surveyed 2,000 Gen Z and Millennial Americans to find out how they feel about workplace romance — and found that a majority of both generations think dating someone from work should be normalized more.

Independent doesn’t mean indecisive.